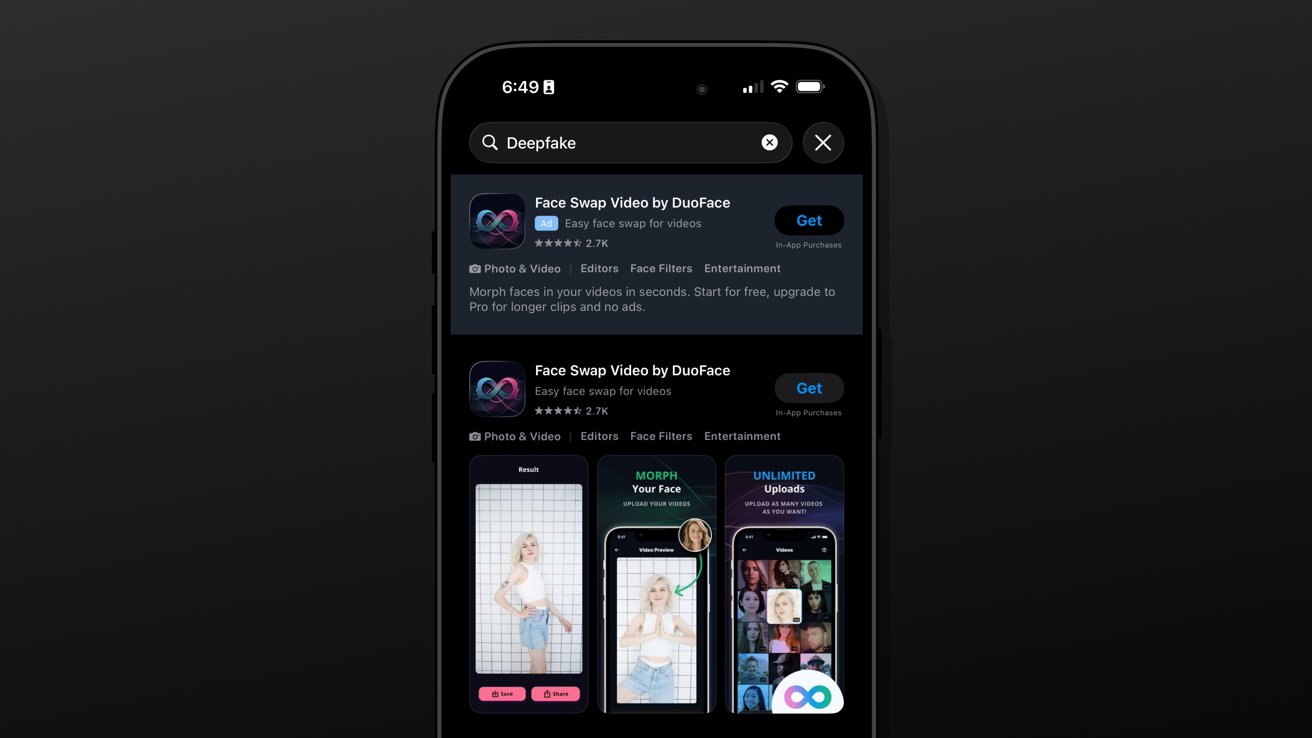

Users looking to create nonconsensual deepfakes of unknowing individuals can simply perform an App Store search. If App Review has a job, it clearly isn’t doing it.

It isn’t just the Grok app that lets users create nonconsensual deepfake nudes and pornography. Apple removed at least 28 such apps in January and even threatened to pull xAI’s Grok from the App Store, but that clearly hasn’t been enough.

According to a report from the Tech Transparency Project, first shared by 9to5Mac, “nudify” apps are appearing in App Store search ads and suggestions. Pornography isn’t banned from the App Store as long as it isn’t an app’s sole purpose, but illegal or harmful products are.

So, you’ll never see a Pornhub app on Apple’s App Store, but apps like X are full of pornographic content. The line that’s being crossed is the ease of generating deepfakes with explicit content.

For example, a face swap app like the one in the featured image could put another person’s face on a video of a man jumping on a trampoline. It’s an odd tool without much practical application, but it’s perfectly allowed.

The problem is that in the same app, a user can upload a pornographic video and swap the face of the person in it with a real individual’s face. I wanted to put this research to the test and see how easily someone could search for, download, and use such an app.

Zero guardrails

I searched for “Deepfake” and got Face Swap Video by DuoFace. I didn’t have to sign in or pay anything, and I was able to upload an explicit video I found online and swap in a person’s face from a stock photo.

There are zero safeguards: no popup warning that the video might be explicit, and nothing to stop the action from going through.

That’s just one example from one app. Other types of apps can remove clothing from photos or turn the people in them into sexually explicit chatbots.

The investigation claims to have found 31 nudify apps that were age-rated as suitable for minors.

Search for explicit AI apps, and autocomplete might finish the search term for you. These apps also show up in the advertising block at the top of search results and among the suggested apps at the bottom of each app page.

Apple’s App Store Guidelines and App Review are meant to stop this from happening. It’s one thing to let a couple dozen apps with hidden nudify capabilities slip through, but another to accept advertising payments to promote them.

App Review is broken

Something is broken about this process. If Apple truly wants to be the App Store’s steward without legal intervention, something needs to change.

Apple has also profited from these apps. The apps identified in the research have been downloaded 483 million times and have pulled in more than $122 million in lifetime revenue.

The researchers even contacted developers to ask about their apps’ functionality. Some were reportedly surprised by the findings and didn’t know the AI they used could even do this.

They’ve promised to add guardrails, but it shouldn’t take external researchers to discover these problems. App Review should be testing any app that claims to edit videos or photos for potential nudify functionality.

For now, be aware that app descriptions and age ratings aren’t foolproof. With AI, there are no guarantees.

Source: www.appleinsider.com
